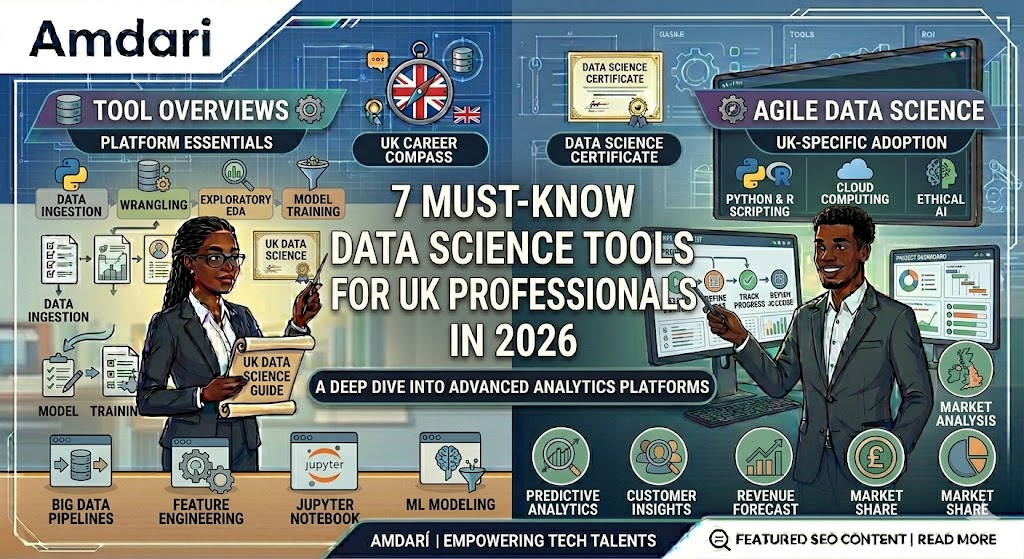

Important things to know

The UK data science market in 2026 is no longer rewarding professionals who only know how to build a model in isolation. It is rewarding people who can move from raw data to business impact while working inside stricter governance, faster AI adoption cycles, and higher commercial expectations.

The direction of travel is clear. The UK government’s AI Labour Market Survey 2025 found that 97% of respondents identified at least one AI skills gap, 57% reported a technical skills gap, and 35% were struggling to fill AI roles. Meanwhile, the Office for National Statistics reported that 23% of UK businesses were using some form of AI technology in late September 2025, up from 9% in September 2023.

That matters because it changes what “must-know tools” means. In 2026, the most valuable data scientists in the UK are not just coding models. They are building reproducible workflows, collaborating across engineering and analytics, operationalising AI responsibly, and translating outputs into decisions that non-technical stakeholders can trust. The winning stack is no longer a loose collection of favourite tools. It is an operating model for modern data work.

So, if you want to position yourself as a serious practitioner rather than another CV in a crowded market, these are the seven tools you need to know.

1) Python: still the core language of modern data science

There is no credible 2026 data science toolkit without Python. Not because it is fashionable, but because it remains the clearest bridge between data analysis, machine learning, automation, and production-grade AI workflows.

For data professionals, Python is more than a language choice. It is a career hedge. Government research on AI skills links expert AI roles closely to combinations of data science, machine learning, and software development. In practice, that means Python remains the default language for professionals who need to sit between experimentation and implementation.

The real distinction in 2026 is not whether you “know Python.” It is whether you can use Python in a way that is maintainable, tested, collaborative, and audit-friendly. That is the difference between scripting and professional practice.
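
To make that distinction concrete, here is a minimal sketch of what "maintainable and tested" can look like for even a tiny piece of analysis. The `Sale` record, the `average_sale_by_region` function, and the sample figures are all hypothetical, invented purely for illustration; the point is the shape (typed inputs, a docstring, explicit validation, and a test that lives next to the code), not the specific calculation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Sale:
    """A single sale record (hypothetical schema for illustration)."""
    region: str
    amount: float


def average_sale_by_region(sales: list[Sale]) -> dict[str, float]:
    """Return the mean sale amount per region.

    Raises ValueError on a negative amount, so bad data fails loudly
    instead of silently skewing the averages.
    """
    grouped: dict[str, list[float]] = {}
    for sale in sales:
        if sale.amount < 0:
            raise ValueError(f"Negative amount for region {sale.region!r}")
        grouped.setdefault(sale.region, []).append(sale.amount)
    return {region: mean(amounts) for region, amounts in grouped.items()}


def test_average_sale_by_region() -> None:
    """A test a reviewer can read and a test runner such as pytest can collect."""
    sales = [Sale("London", 100.0), Sale("London", 300.0), Sale("Leeds", 50.0)]
    assert average_sale_by_region(sales) == {"London": 200.0, "Leeds": 50.0}
```

The same logic could be written in three lines of a notebook cell. What makes this version closer to professional practice is that a colleague can review it, rerun the test after a change, and see exactly which inputs are rejected.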

2) JupyterLab: where experimentation becomes communication

JupyterLab has grown far beyond its reputation as a notebook sandbox. Project Jupyter describes notebooks as documents that combine code, narrative, visualisation, and data, while JupyterLab is positioned as the next-generation interface for notebooks, code, and data. That makes it one of the most important communication surfaces in modern data science.

Why does that matter in the UK market? Because most organisations do not need more opaque technical work. They need clearer decision support. As AI adoption spreads across existing roles, the ability to explain assumptions, surface evidence, and make outputs understandable to non-specialists becomes a premium skill.

In practical terms, JupyterLab should be part of your workflow if you do exploratory analysis, feature engineering, model prototyping, experiment reporting, stakeholder walkthroughs, or lightweight reproducible research.

The mistake many teams still make is treating notebooks as throwaway scratchpads. The best teams treat them as first-class analytical assets: structured, versioned, documented, and connected to production pathways. That is when JupyterLab becomes strategic rather than convenient.

Many Africans struggle to land tech jobs because they lack local work experience in the UK, US and Canada. That is why we built a low-risk work environment where they can build a portfolio of UK, US and Canadian work and improve their chances of being hired. Many participants have shared testimonies about how much it helped. To find out how to get started, book a free career consultation call with us here.

3) GitHub: because version control is now a baseline professional skill

If Python is your core language and JupyterLab is your interactive workspace, GitHub is your professional memory.

GitHub’s documentation makes the underlying case clearly: Git enables teams to track changes, recover earlier versions, and understand what changed, who changed it, and why. In data science, that is no longer optional. It is baseline operating hygiene.

For years, some data scientists got away with weak version control habits because they worked in isolated analysis environments. That no longer scales. As AI adoption grows, the commercial, compliance, and collaboration stakes around analytical work increase. ONS evidence also suggests organisations are often integrating AI by expanding the capabilities of existing teams, not just hiring entirely new specialist groups. That makes cross-functional collaboration more important, not less.

In 2026, GitHub is more than a code host. It is where strong teams manage documentation, issues, pull requests, automation, and increasingly AI-assisted development. If you want to work like a thought leader, make your work reviewable, reproducible, and transparent.

4) dbt: the tool that turned data transformation into a disciplined practice

One of the most important changes in the modern data stack is the rise of analytics engineering. That is exactly why dbt matters.

dbt’s documentation frames the platform around transforming data directly in your cloud environment, while also supporting documentation, metadata, and lineage. Its newer documentation and catalog experiences continue to reinforce that emphasis on governed understanding of the data estate, not just raw transformation scripts.

This is not just a data engineering concern. It is a data science concern. Most weak models are not caused by elegant algorithms failing; they are caused by bad assumptions, inconsistent business definitions, and poorly governed upstream data.

dbt earns its place on this list because it teaches a professional lesson that many organisations still learn too late: better models start with better governed data. If you can write clean SQL transformations, define tests, document logic, and understand lineage, you become more than a modeller. You become someone who improves trust across the entire data estate.
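
The idea of "defining tests" on data can be sketched without dbt itself. dbt ships generic tests such as `not_null` and `unique`, and a data test passes when its query returns no failing rows. The example below imitates that idea in plain Python with the standard-library `sqlite3` module. It is an illustration of the concept, not dbt's implementation, and the `orders` table, its columns, and the `failing_rows` helper are hypothetical.

```python
import sqlite3


def failing_rows(conn: sqlite3.Connection, sql: str) -> list[tuple]:
    """Run a test query; any rows returned are treated as test failures."""
    return conn.execute(sql).fetchall()


# Hypothetical table with two deliberate data problems.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_id INTEGER, customer_id INTEGER)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 10), (2, 11), (2, 12), (3, None)],  # duplicate order_id 2, missing customer_id
)

# Equivalent in spirit to a not-null check on customer_id.
NOT_NULL_SQL = "SELECT order_id, customer_id FROM orders WHERE customer_id IS NULL"

# Equivalent in spirit to a uniqueness check on order_id.
UNIQUE_SQL = (
    "SELECT order_id, COUNT(*) AS n FROM orders "
    "GROUP BY order_id HAVING COUNT(*) > 1"
)

null_failures = failing_rows(conn, NOT_NULL_SQL)
duplicate_failures = failing_rows(conn, UNIQUE_SQL)

print("not-null failures:", null_failures)
print("uniqueness failures:", duplicate_failures)
```

Both checks return rows here, so both would count as failing. Catching a duplicated key or a missing customer before it reaches a model is exactly the kind of upstream discipline the section describes.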

5) Databricks: where data engineering, machine learning, and AI converge

Databricks is one of the clearest indicators of where enterprise data work is going. Its documentation describes the Databricks Data Intelligence Platform as enabling data analysts, data scientists, and data engineers to collaborate on analytics and AI in the lakehouse. Its architecture materials also describe an end-to-end platform covering ingestion, transformation, querying, processing, serving, analysis, and storage.

For UK professionals, the appeal is simple: the market increasingly rewards convergence. Employers do not want specialists who can only touch one isolated layer of the lifecycle. They want professionals who understand how experimentation connects to governed data, scalable compute, and delivery pathways.

That aligns with the wider UK skills picture. Government research points to ongoing skills gaps, greater reliance on practical implementation, and growing AI adoption across organisations. In that context, platforms that reduce friction between teams become more valuable.

Even if your company is not a full Databricks shop, understanding the Databricks model makes you stronger. It forces you to think in systems rather than tasks. That is increasingly how senior data leaders evaluate talent.

6) Snowflake: the warehouse is becoming an AI work surface

Snowflake should no longer be thought of as “just” a cloud data warehouse. Snowflake now frames its platform as the AI Data Cloud: a unified platform and connected ecosystem for organisations building, using, and sharing data, applications, and AI.

That strategic shift became even more visible in February 2026, when Snowflake announced a multi-year $200 million partnership with OpenAI to bring enterprise-ready AI more directly into its platform. Reuters reported that the move is designed to let customers work with company data through natural-language interfaces and AI-driven workflows inside Snowflake’s environment. Snowflake’s own announcement describes the deal as a co-innovation and go-to-market partnership to embed advanced AI into its trusted data platform.

For UK professionals, the lesson is not that you need to memorise every Snowflake feature. It is that you need to understand where enterprise architecture is heading. Storage, analytics, governance, and AI inference are no longer cleanly separated. They are being pulled together into a common work surface.

Professionals who understand that shift will be better prepared for real-world enterprise data science than those still thinking in legacy categories.

7) Power BI: because insight that is not adopted is not impact

A technically strong model that nobody uses has almost no business value. That is why Power BI belongs on this list.

Microsoft’s documentation places Power BI inside a broader Microsoft Fabric ecosystem and continues to expand Copilot-related capabilities around business-facing analytics workflows. Microsoft’s Fabric updates in February 2026 also highlighted broader availability of Copilot in Fabric, including Copilot for Power BI, and published new guidance on using machine learning to enrich Power BI reports. Microsoft’s Power BI AI instructions guidance also documents a 10,000-character limit for AI instructions, which is useful context for teams operationalising AI-assisted reporting.

In the UK, this matters because AI responsibilities are increasingly being added to existing roles rather than appearing only as new specialist job titles. Government research explicitly points to widespread integration of AI capabilities across the labour market, which makes business-facing tools even more important.

That is why Power BI is more than a dashboarding layer. It is the final mile of analytical credibility. Leaders trust what they can inspect, query, and use. If your work never escapes notebooks and presentations, your influence stays limited. Power BI helps close that gap.

What these seven tools say about data science in 2026

The bigger story is not the tools themselves. It is the pattern.

The 2026 data scientist in the UK is moving away from the stereotype of the lone modeller and toward a more integrated professional identity:

- Python for technical fluency

- JupyterLab for analytical storytelling

- GitHub for versioning and collaboration

- dbt for trustworthy transformed data

- Databricks for end-to-end analytics and AI workflows

- Snowflake for governed data-plus-AI execution

- Power BI for business adoption and decision activation

That stack reflects where the profession is heading. AI adoption is rising. Skills shortages remain real. Organisations are retraining staff while expecting faster delivery and stronger governance. The professionals who can connect experimentation, engineering discipline, data quality, and business communication will shape the next phase of the market.

The strategic takeaway for data professionals, especially Africans in the UK, US and Canada

If you want to build authority in 2026, stop asking which single tool is “best.” That is the wrong question.

The right question is: which combination of tools makes me more credible across the full lifecycle of data and AI delivery?

That is how thought leaders think. They do not optimise for novelty. They optimise for relevance, reliability, and leverage. So yes, learn the libraries. Learn the syntax. Learn the platforms. But more importantly, learn how they fit together into a coherent operating model. In a global job market shaped by AI acceleration, skills gaps, and rising expectations for measurable impact, systems-level capability is what will separate leaders from practitioners who are still playing catch-up.

Want to see projects that will improve your data science portfolio? Check out some of the projects our data science work experience participants include in theirs. You can also take a free 1-minute job readiness test to see what you need to get right. Click here to take the test.